An AI receptionist looks magical from the outside. Pick up the phone, talk to a friendly Kiwi or Aussie voice, get your booking, hang up. Done.

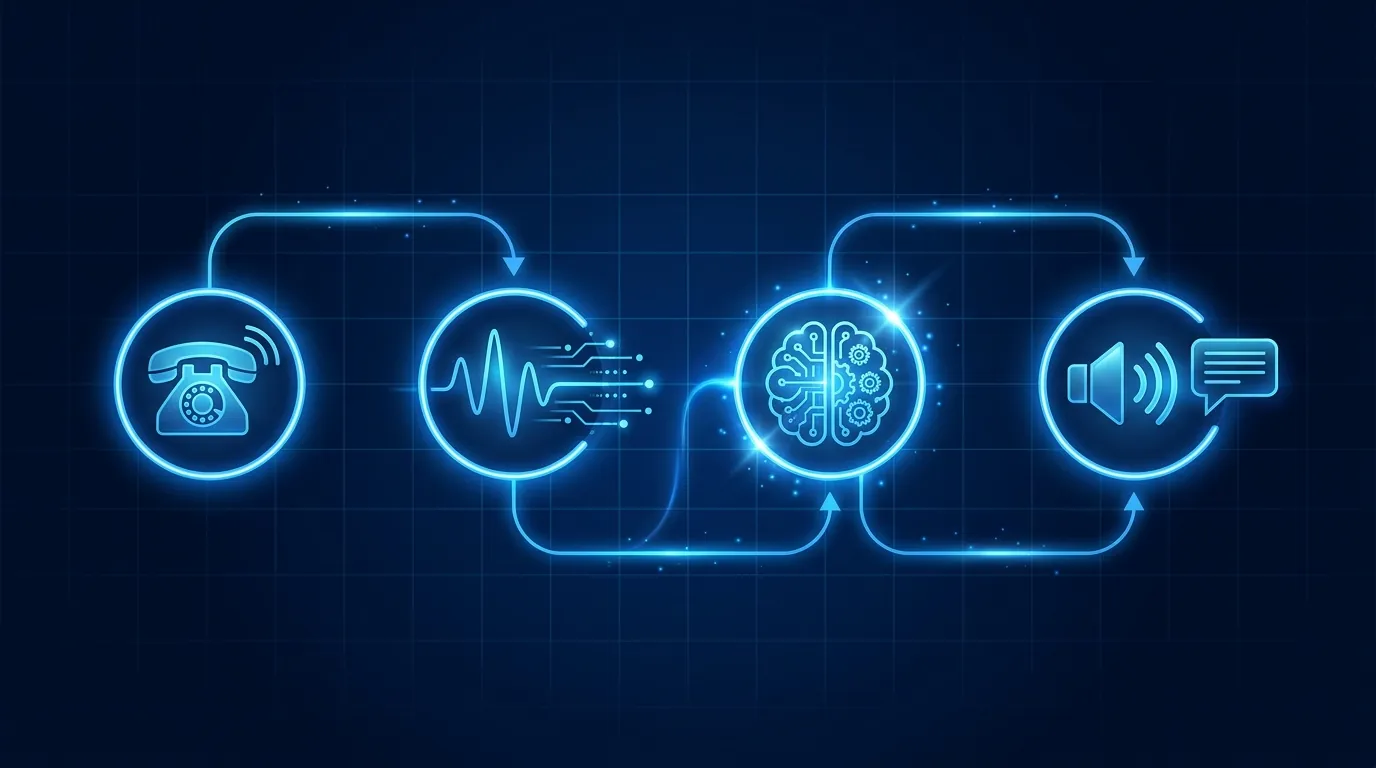

Under the bonnet, four stages run in a tight loop, end to end, in under one second. Here is each one in plain English.

Stage 1: telephony

When a caller dials your number, the call hits a phone provider first. We use Twilio in Australasia. Most platforms in 2026 use Twilio, Vonage, or Telnyx under the hood.

The phone provider answers, then routes the audio stream to the AI agent over a realtime connection. The whole route takes about 100-200ms in NZ and AU.

The phone provider is also where data residency lives. If your audio needs to stay onshore, the provider is the layer that enforces it. Most enterprise deployments use a regional Twilio data centre (Sydney for AU; there is no NZ data centre yet, so NZ traffic typically routes through Sydney as well).

Stage 2: speech to text

The audio stream from the phone provider goes to a speech-to-text engine. The most common engines in 2026 are Deepgram, AssemblyAI, OpenAI Whisper, and Google Cloud Speech.

The STT engine listens to the caller's audio and produces a running text transcript, typically 200ms behind the actual audio. So when the caller says "I would like to book an appointment for next Tuesday at 2pm", the text appears in the system roughly a fifth of a second later.

A good STT engine handles NZ and Australian accents without breaking. It also handles cross-talk (when the caller and agent talk at the same time) and end-of-turn detection (working out when the caller has finished their sentence).

End-of-turn detection is harder than it sounds. Wait too briefly and the agent interrupts. Wait too long and the conversation feels stilted. The platforms that reliably hold sub-800ms turn times have spent serious engineering on this layer.
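As a rough illustration of that trade-off, here is a minimal sketch of the simplest possible end-of-turn rule: declare the turn finished once the caller has been silent for a fixed stretch. The class name, the 500ms threshold, and the 20ms frame length are assumptions for illustration only. Production platforms typically combine this kind of timer with smarter signals.

```python
from dataclasses import dataclass


@dataclass
class EndOfTurnDetector:
    """Declares the caller's turn finished after enough continuous silence."""

    silence_threshold_ms: int = 500  # hypothetical value; too low interrupts, too high feels stilted
    frame_ms: int = 20               # assumed length of one audio frame
    _silence_ms: int = 0
    _heard_speech: bool = False

    def feed(self, is_speech: bool) -> bool:
        """Consume one frame's voice-activity flag; return True when the turn ends."""
        if is_speech:
            self._heard_speech = True
            self._silence_ms = 0
            return False
        if not self._heard_speech:
            # Silence before the caller has said anything is not a turn ending.
            return False
        self._silence_ms += self.frame_ms
        if self._silence_ms >= self.silence_threshold_ms:
            # Reset so the detector is ready for the caller's next turn.
            self._heard_speech = False
            self._silence_ms = 0
            return True
        return False
```

Lowering `silence_threshold_ms` makes the agent reply faster but more likely to cut in during a mid-sentence pause. Raising it does the opposite.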

Stage 3: the language model

Once the caller's transcript is in, the language model takes over. This is the brain.

The model reads the caller's transcript, together with your prompt and knowledge base, then decides what to say back.

The choice of model matters more than people realise. We compared the major options in our piece on the best LLM for voice agents. The short version: GPT-4.1-mini for default scripted work, Claude Haiku 4.5 for compliance-heavy or rule-dense agents, GPT-4.1 standard for long branching conversations.

The model also calls functions when needed. We cover function calls below.

Stage 4: text to speech

The model's text reply goes to a text-to-speech engine. The major players in 2026 are ElevenLabs, OpenAI Realtime, Cartesia, and Google.

The TTS engine speaks the text in your chosen voice. Modern voices in 2026 are indistinguishable from a human voice on a phone line in over 80% of blind tests. The giveaway used to be unnatural pauses and intonation. That is mostly solved.

You pick the voice during setup. We offer a Kiwi voice on NZ deployments and an Australian voice on AU deployments. Both sound like a real person on the other end of a phone line.
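Put together, one turn of the loop can be sketched as three calls chained after the phone provider delivers the audio. This is a simplified illustration, not how any particular platform is implemented: the function names are hypothetical, the stages are passed in as plain callables, and real systems stream audio and text between stages rather than waiting for each one to finish.

```python
from typing import Callable


def run_turn(
    caller_audio: bytes,
    speech_to_text: Callable[[bytes], str],
    language_model: Callable[[str], str],
    text_to_speech: Callable[[str], bytes],
) -> bytes:
    """Run one conversational turn: caller audio in, agent audio out."""
    transcript = speech_to_text(caller_audio)   # Stage 2
    reply_text = language_model(transcript)     # Stage 3
    return text_to_speech(reply_text)           # Stage 4


# Stand-in stages so the sketch runs without any external service.
def fake_stt(audio: bytes) -> str:
    return audio.decode("utf-8")


def fake_llm(transcript: str) -> str:
    return f"You said: {transcript}"


def fake_tts(text: str) -> bytes:
    return text.encode("utf-8")


agent_audio = run_turn(b"book me in for Tuesday", fake_stt, fake_llm, fake_tts)
```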

The 800ms latency budget

Human conversational turn-taking sits at 200-400ms. That is the natural pause between "how are you?" and "good thanks". You have been tuning your ear to that rhythm since you could speak.

Drift past 500ms and the conversation feels off. Drift past 800ms and your caller starts wondering if something is broken. Past one second and they have stopped listening.

Total turn time is the sum of all four stages plus network round-trip. A good platform sits at 600-800ms total. We covered the engineering of holding that budget in "Mastering voice AI latency".

If your AI agent sounds slow, the model is usually not the first thing to blame. The fix is in the rest of the stack. We covered the diagnostic in "Your AI agent sounds slow, the AI is not the problem".
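The budget arithmetic can be worked through with a few lines of code. The telephony and speech-to-text figures below are taken from the ranges quoted earlier in this article. The language model, text-to-speech, and network figures are illustrative assumptions, not measurements.

```python
# Per-stage latency for one conversational turn, in milliseconds.
STAGE_LATENCY_MS = {
    "telephony": 150,          # midpoint of the 100-200ms quoted above
    "speech_to_text": 200,     # transcript lag quoted above
    "language_model": 250,     # assumption for illustration
    "text_to_speech": 100,     # assumption for illustration
    "network_round_trip": 50,  # assumption for illustration
}

BUDGET_MS = 800


def total_turn_ms(stages: dict[str, int]) -> int:
    """Total turn time is the sum of every stage plus network round-trip."""
    return sum(stages.values())


def headroom_ms(stages: dict[str, int], budget_ms: int = BUDGET_MS) -> int:
    """Milliseconds left before the turn blows the budget (negative if over)."""
    return budget_ms - total_turn_ms(stages)


total = total_turn_ms(STAGE_LATENCY_MS)   # 750
spare = headroom_ms(STAGE_LATENCY_MS)     # 50
```

With these example numbers the turn lands at 750ms, leaving only 50ms of headroom, which is why a small slowdown in any single stage is enough to push a call past the budget.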

Functions: how the agent actually does things

The four stages above let the agent talk. But talking is not enough. To book a meeting, the agent has to actually create an event in your calendar.

This is what function calling does.

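The model is wired with a list of functions it is allowed to call. Examples on a typical receptionist include booking an appointment in your calendar, sending a confirmation SMS, and warm-transferring the call to a human.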

The model decides when to call each function. The platform runs the function. The result comes back to the model. The model continues the conversation.
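That loop can be sketched as a small dispatcher. Everything here is hypothetical: the function names, their parameters, and the shape of the model's request (a dict with `name` and `arguments`) are assumptions for illustration, and the handlers are stubs that do not touch a real calendar or SMS service.

```python
import json
from typing import Any, Callable


def book_appointment(name: str, start: str) -> dict[str, Any]:
    # Stub: a real handler would create the event through a calendar API.
    return {"status": "booked", "name": name, "start": start}


def send_sms(to: str, body: str) -> dict[str, Any]:
    # Stub: a real handler would hand the message to an SMS provider.
    return {"status": "queued", "to": to}


# The allow-list: the model can only trigger what is registered here.
ALLOWED_FUNCTIONS: dict[str, Callable[..., dict[str, Any]]] = {
    "book_appointment": book_appointment,
    "send_sms": send_sms,
}


def run_function_call(call: dict[str, Any]) -> str:
    """Run one function the model asked for and return the result as JSON text."""
    name = call["name"]
    handler = ALLOWED_FUNCTIONS.get(name)
    if handler is None:
        return json.dumps({"error": f"function not allowed: {name}"})
    result = handler(**call["arguments"])
    # The JSON string is what gets handed back to the model to continue the call.
    return json.dumps(result)


# Example: the model has decided the caller wants a booking.
model_request = {
    "name": "book_appointment",
    "arguments": {"name": "Sam", "start": "2026-03-03T14:00"},
}
function_result = run_function_call(model_request)
```

Keeping the allow-list on the platform side means the model can only request actions, while the platform decides whether and how they run.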

This is how a single AI receptionist can hold a real conversation, book a real appointment in Google Calendar, send a real confirmation SMS, and warm-transfer a real call to a human in your team. All inside one phone call. All in under 60 seconds.

Frequently asked questions

Does it learn over time?

Indirectly. The base model does not retrain on your calls (that would be a privacy nightmare). Your knowledge base and prompt do get tuned weekly based on review of edge cases. So the agent gets sharper, even if the underlying model is fixed.

What happens if the model has a brain freeze?

Modern voice platforms have fallback handling. If the model takes too long, the platform fills with a natural filler ("let me check that for you") to buy time. If the model returns nothing useful, the platform escalates to a warm transfer.
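A minimal sketch of that fallback logic, using Python's standard `asyncio` library. The function name, the timing thresholds, and the `("say", ...)` / `("transfer", ...)` action tuples are assumptions for illustration, not any platform's actual API.

```python
import asyncio
from typing import Awaitable, Callable

FILLER = "Let me check that for you."


async def reply_with_fallback(
    model_call: Callable[[], Awaitable[str]],
    filler_after_s: float = 0.7,   # hypothetical: when to start stalling
    give_up_after_s: float = 5.0,  # hypothetical: when to stop waiting
) -> list[tuple[str, str]]:
    """Return the ordered actions the platform should take for this turn."""
    actions: list[tuple[str, str]] = []
    task = asyncio.ensure_future(model_call())
    try:
        # shield() keeps the model call running if this first wait times out.
        reply = await asyncio.wait_for(asyncio.shield(task), timeout=filler_after_s)
    except asyncio.TimeoutError:
        actions.append(("say", FILLER))  # buy time with a natural filler
        try:
            reply = await asyncio.wait_for(task, timeout=give_up_after_s)
        except asyncio.TimeoutError:
            reply = ""  # the model never answered; treat as nothing useful
    if reply.strip():
        actions.append(("say", reply))
    else:
        actions.append(("transfer", "human"))  # escalate to a warm transfer
    return actions


async def slow_model() -> str:
    await asyncio.sleep(0.05)
    return "You are booked for Tuesday at 2pm."


actions = asyncio.run(
    reply_with_fallback(slow_model, filler_after_s=0.01, give_up_after_s=1.0)
)
```

In this example the stand-in model is slower than the filler threshold, so the caller hears the filler first and then the real answer.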

What languages can it speak?

Most TTS engines support 30+ languages in 2026. The model layer supports most of those at high quality. The bottleneck is usually the STT engine on rare accents.

How private is the call data?

Audio gets stored if you want recordings. Transcripts get stored for review and quality control. Both get encrypted at rest. Most platforms allow per-call opt-out and a hard delete on request.

Can two AI agents talk to each other?

Technically yes. We have not seen a useful production case yet. The most common agent-to-agent (A2A) scenario is one agent transferring to another as a routing step, which is mostly a function call rather than a real conversation.

What hardware does it run on?

Cloud GPUs. The model layer runs in Anthropic, OpenAI, or Google data centres. Inference on a single call uses a tiny fraction of one GPU's capacity. The cost per minute reflects fractional GPU utilisation, not dedicated hardware.

Leonardo Garcia-Curtis

Founder & CEO at Waboom AI. Building voice AI agents that convert.
